SafetyKit: Verified Review & AI Trust Profile

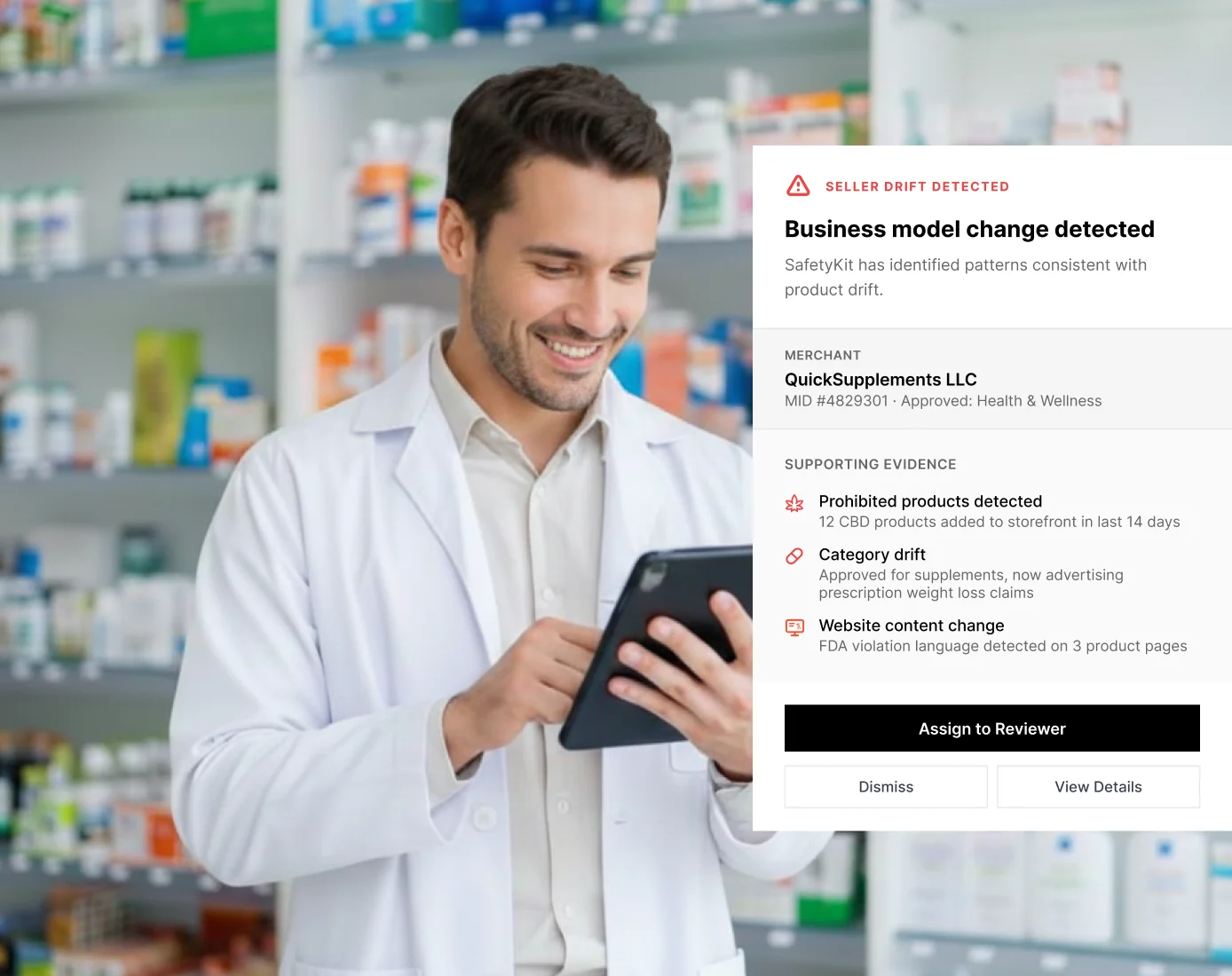

Deploy AI agents to automate risk reviews, onboarding, and investigations. Trusted by leading marketplaces and fintech platforms.


Trust Score — Breakdown

SafetyKit Conversations, Questions and Answers

3 questions and answers about SafetyKit


How can AI agents help automate risk reviews and fraud detection in online marketplaces?

AI agents can automate risk reviews and fraud detection in online marketplaces by using real-time machine learning and agentic AI to analyze transactions, user behavior, and content. These systems proactively identify suspicious activities, reduce false positives, and speed up decision-making processes. By integrating human intelligence with AI, platforms can efficiently mitigate risks such as fraud, abuse, and spam, improving overall security and operational efficiency. This automation also helps reduce costs and enhances the quality of marketplace experiences for both buyers and sellers.


What are the benefits of using AI for content moderation in digital marketplaces?

Using AI for content moderation in digital marketplaces offers several benefits, including the ability to enforce hundreds of policy areas and custom standard operating procedures efficiently. AI models can quickly analyze vast amounts of listings and user-generated content to detect violations such as spam, harassment, counterfeit products, and inappropriate media. This leads to faster deployment times, higher accuracy rates, and consistent enforcement of policies. Additionally, AI-driven moderation reduces the workload on human teams, allowing them to focus on complex cases while maintaining a safe and trustworthy environment for users.


How does AI-native case management improve investigation workflows in fraud detection?

AI-native case management enhances investigation workflows in fraud detection by automating the triage, investigation, and escalation of cases. Agentic AI workflows prioritize and handle routine tasks, allowing human investigators to focus on high-impact and complex cases. This automation accelerates the investigation process, increases accuracy, and ensures continuous monitoring around the clock. By integrating AI with human expertise, platforms can conduct deeper dives into merchant activities, listings, and networks more efficiently, resulting in faster takedowns of coordinated bad actors and improved overall risk mitigation.

Trusted By

EtsyKey client

EtsyKey client_logo%201.png) LimeKey client

LimeKey client UpworkKey client

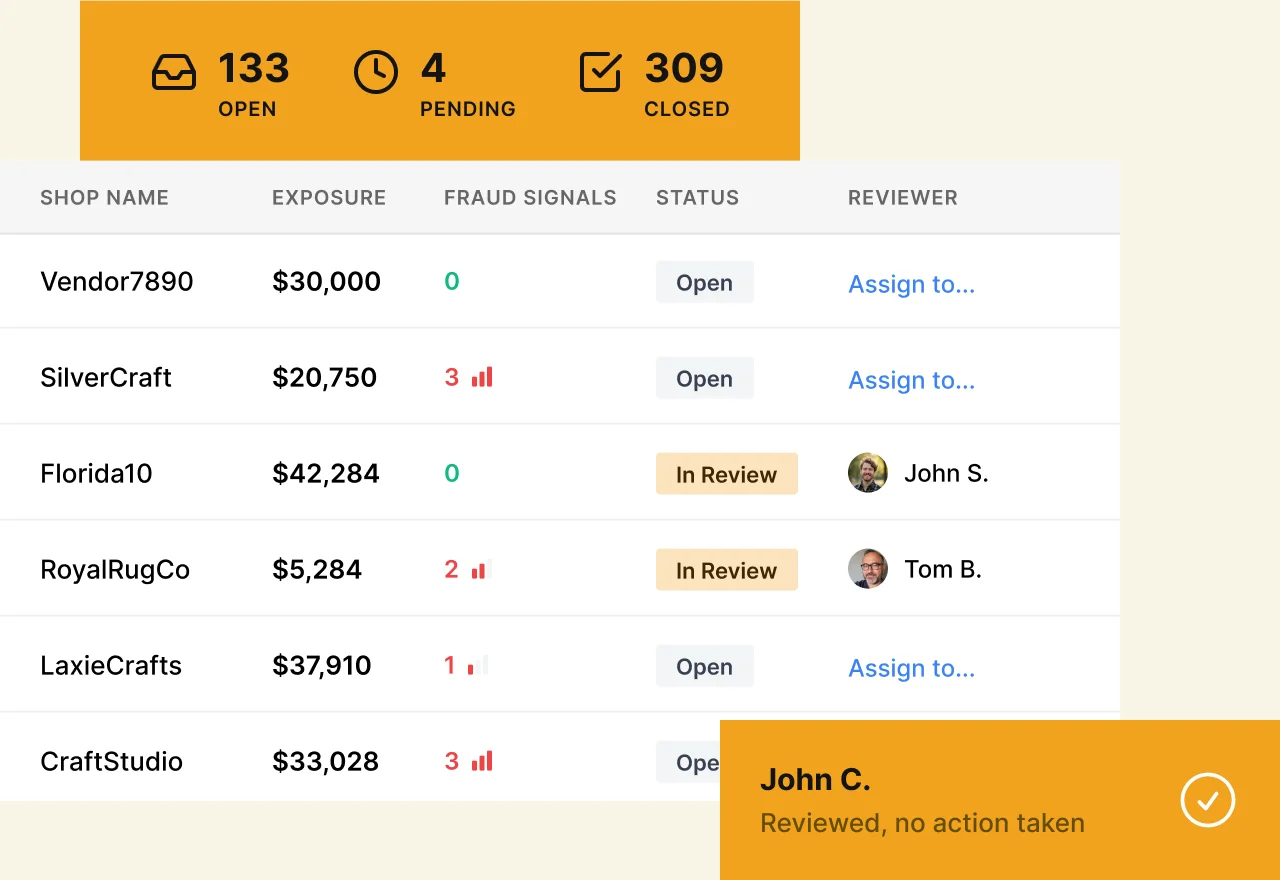

UpworkKey client Case management performance dashboard

Case management performance dashboard Character.ai

Character.ai Content moderation dashboard interface

Content moderation dashboard interface Discord

Discord Eventbrite

Eventbrite Faire

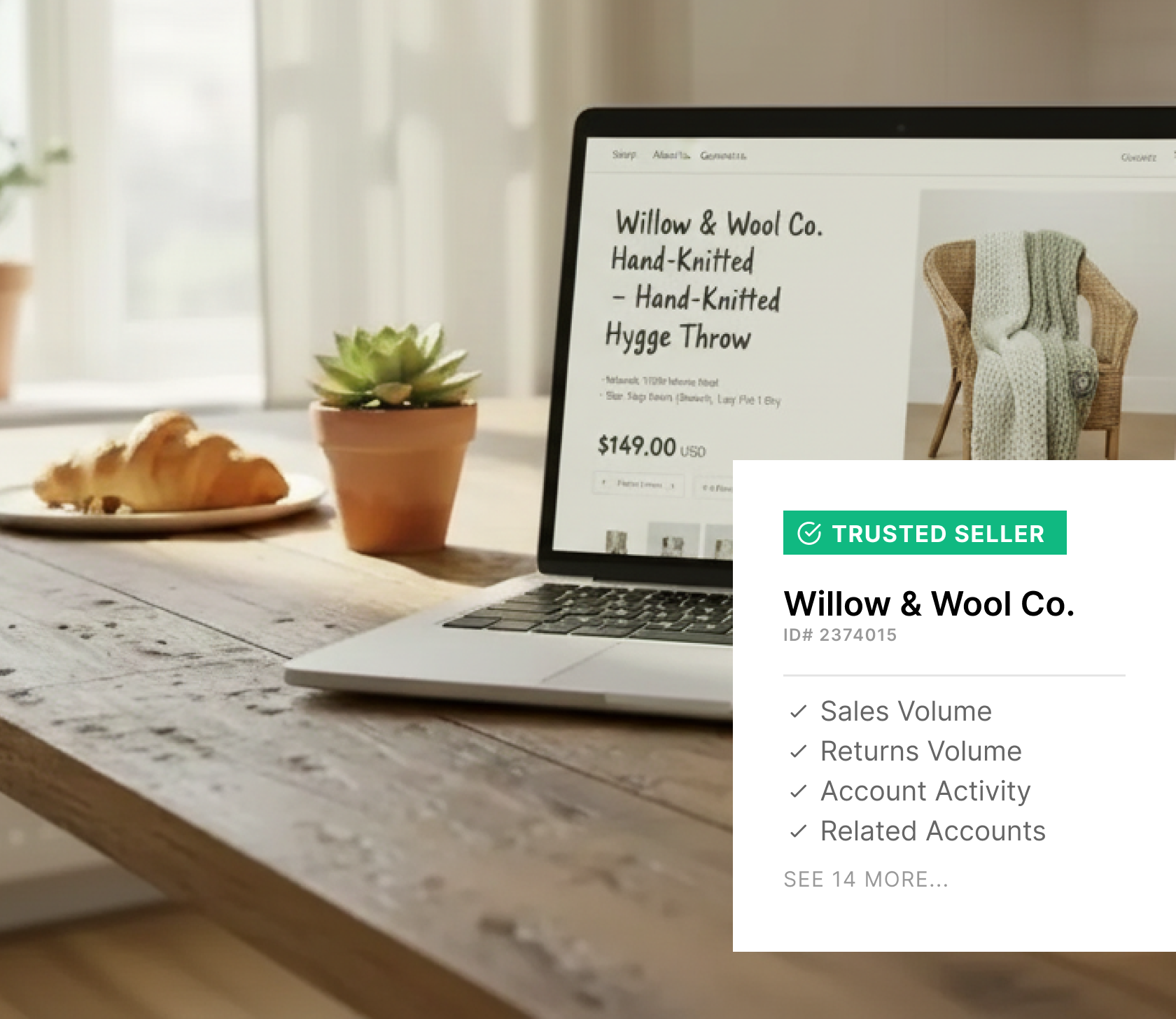

Faire Interface mockup illustrating faster risk decisions and fewer false positives

Interface mockup illustrating faster risk decisions and fewer false positives Kickstarter

Kickstarter Merchant investigations dashboard

Merchant investigations dashboard Patreon

Patreon Substack

SubstackServices

Content Moderation and Platform Security

Content & Platform Safety

Fraud Prevention and Risk Management

Fraud and Risk Detection

AI Trust Verification Report

Public validation record for SafetyKit: evidence of machine-readability across 57 technical checks and 4 LLM visibility validations.

Evidence & Links

- Crawlability & Accessibility

- Structured Data & Entities

- Content Quality Signals

- Security & Trust Indicators

Verifiable Identity Links

Legal & Compliance

- Privacy Policy

- Compliance

Third-party Identity

- X (Twitter)

Do These LLMs Know This Website?

LLM "knowledge" is not binary. Some answers come from training data, others from retrieval/browsing, and results vary by prompt, language, and time. Our checks measure whether the model can correctly identify and describe the site for relevant prompts.

| LLM Platform | Recognition Status | Visibility Check |
|---|---|---|
| (platform name not captured) | Detected | Detected |
| (platform name not captured) | Detected | Detected |
| Gemini | Partial | Improve Gemini visibility by making core pages easy to crawl and easy to summarize: clear headings, FAQ sections, and structured data. Keep metadata (title/description) unique and aligned with the page content. Build consistent entity signals across your site and trusted third-party profiles. |
| Grok | Partial | Improve Grok visibility by maintaining consistent brand facts and strong entity signals (About page, Organization schema, sameAs links). Keep key pages fast, crawlable, and direct in their answers. Regularly update important pages so AI systems have fresh, reliable information to cite. |

Note: Model outputs can change over time as retrieval systems and model snapshots change. This report captures visibility signals at scan time.

What We Tested (57 Checks)

We evaluate categories that affect whether AI systems can safely fetch, interpret, and reuse information:

Crawlability & Accessibility (12 checks)

Fetchable pages, indexable content, robots.txt compliance, crawler access for GPTBot, OAI-SearchBot, Google-Extended

Structured Data & Entity Clarity (11 checks)

Schema.org markup, JSON-LD validity, Organization/Product entity resolution, knowledge panel alignment

Content Quality & Structure (10 checks)

Answerable content structure, factual consistency, semantic HTML, E-E-A-T signals, presence of citation-worthy data

Security & Trust Signals (8 checks)

HTTPS enforcement, secure headers, privacy policy presence, author verification, transparency disclosures

Performance & UX (9 checks)

Core Web Vitals, mobile rendering, minimal JavaScript dependency, reliable uptime signals

Readability Analysis (7 checks)

Clear nomenclature matching user intent, disambiguation from similar brands, consistent naming across pages
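The crawler-access part of the Crawlability & Accessibility category can be approximated locally. The following is a minimal sketch, not Bilarna's actual check: it uses Python's standard `urllib.robotparser` to test whether a robots.txt file would let the three user agents named above fetch a given URL. The sample robots.txt content and the `example.com` URL are hypothetical and only serve as illustration.

```python
from urllib.robotparser import RobotFileParser

# Crawler user agents named in the Crawlability & Accessibility checks.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "Google-Extended"]


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return, per crawler, whether the robots.txt rules allow fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Hypothetical robots.txt content, for illustration only.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    results = crawler_access(SAMPLE_ROBOTS, "https://example.com/docs")
    for agent, allowed in results.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

With the sample rules, GPTBot is blocked while the other two agents fall through to the wildcard rule and are allowed. To test a live site, the real robots.txt text would be passed in place of `SAMPLE_ROBOTS`.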

22 AI Visibility Opportunities Detected

These technical gaps effectively "hide" SafetyKit from modern search engines and AI agents.

Top 3 Blockers

- Canonical tags are used properly: Use canonical tags to define the preferred version of each page, especially when parameters, filters, or duplicate URLs exist. Canonicals prevent duplicate-content confusion and consolidate ranking signals. Verify canonical URLs return 200 status and point to the correct, indexable page.

- LLM-crawlable llms.txt: Create an llms.txt file to guide AI crawlers to your most important, high-quality pages (docs, pricing, about, key guides). Keep it short, well-structured, and focused on authoritative URLs you want cited. Treat it as a curated “AI sitemap” that improves discovery and reduces the risk of crawlers prioritizing low-value pages.

- Does the page have transparent privacy and terms pages? Publish clear Privacy Policy and Terms pages and link them from the footer. Explain data collection, cookies, user rights, and how requests are handled (especially for regulated regions). These pages increase trust and legitimacy signals that support both SEO and AI-driven discovery.
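For the canonical-tag blocker, a first step is simply confirming that each page declares exactly one canonical URL. The sketch below is one possible way to do that with Python's standard `html.parser`; the class and function names and the sample page are hypothetical, and it does not perform the follow-up check that the canonical URL returns a 200 status, which would need a network request.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        # rel may hold several space-separated tokens; compare case-insensitively.
        rel_values = (attr_map.get("rel") or "").lower().split()
        href = attr_map.get("href")
        if "canonical" in rel_values and href:
            self.canonicals.append(href)


def find_canonicals(html: str) -> list[str]:
    """Return all canonical URLs declared in the given HTML document."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


# Hypothetical page markup, for illustration only.
SAMPLE_PAGE = """
<html><head>
<title>Pricing</title>
<link rel="canonical" href="https://example.com/pricing">
</head><body></body></html>
"""

if __name__ == "__main__":
    found = find_canonicals(SAMPLE_PAGE)
    if len(found) == 1:
        print("canonical:", found[0])
    else:
        print(f"expected exactly one canonical tag, found {len(found)}")
```

A page that returns an empty list or more than one URL is a candidate for the duplicate-content confusion described above.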

Top 3 Quick Wins

- List in public LLM indexes (e.g., Huggingface database, Poe Profiles): List your tools, datasets, docs, or brand pages on major AI/LLM discovery hubs where relevant (for example model/dataset repositories or app directories). These platforms add credibility signals (likes, forks, usage) and create additional crawlable references to your brand. Keep names, descriptions, and links consistent with your official website.

- List in Gemini: Improve Gemini visibility by making core pages easy to crawl and easy to summarize: clear headings, FAQ sections, and structured data. Keep metadata (title/description) unique and aligned with the page content. Build consistent entity signals across your site and trusted third-party profiles.

- List in Grok: Improve Grok visibility by maintaining consistent brand facts and strong entity signals (About page, Organization schema, sameAs links). Keep key pages fast, crawlable, and direct in their answers. Regularly update important pages so AI systems have fresh, reliable information to cite.
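The "Organization schema, sameAs links" recommendation refers to schema.org JSON-LD markup. As a rough sketch, the snippet below builds such a block in Python and wraps it in the script tag that pages typically embed in their head. The helper names, the company name, and every URL are placeholders assumed for illustration; none of them come from this report.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists third-party profiles that identify the same entity.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap a JSON-LD string in the script tag used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Placeholder values; replace with the organization's real facts.
    markup = organization_jsonld(
        name="Example Co",
        url="https://example.com",
        same_as=["https://x.com/example"],
    )
    print(as_script_tag(markup))
```

Keeping the `name`, `url`, and `sameAs` values identical to what appears on the About page and on third-party profiles is what makes the entity signals consistent.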

Claim this profile to instantly generate the code that makes your business machine-readable.

Embed Badge

Verified

Display this AI Trust indicator on your website. It links back to this public verification URL.

<a href="https://bilarna.com/provider/safetykit" target="_blank" rel="nofollow noopener noreferrer" class="bilarna-trust-badge">
<img src="https://bilarna.com/badges/ai-trust-safetykit.svg"
alt="AI Trust Verified by Bilarna (35/57 checks)"
width="200" height="60" loading="lazy">
</a>

Cite This Report

APA / MLA

Paste-ready citation for articles, security pages, or compliance documentation.

Bilarna. "SafetyKit AI Trust & LLM Visibility Report." Bilarna AI Trust Index, Jan 23, 2026. https://bilarna.com/provider/safetykit

What Verified Means

Verified means Bilarna's automated checks found enough consistent trust and machine-readability signals to treat the website as a dependable source for extraction and referencing. It is not a legal certification or an endorsement; it is a measurable snapshot of public signals at the time of scan.

Frequently Asked Questions

What does the AI Trust score for SafetyKit measure?


It summarizes crawlability, clarity, structured signals, and trust indicators that influence whether AI systems can reliably interpret and reference SafetyKit. The score aggregates 57 technical checks across six categories that affect how LLMs and search systems extract and validate information.
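The figures quoted in this report can be cross-checked with simple arithmetic. The sketch below assumes, since the report does not state its scoring formula, that the score is a plain pass ratio and that every failed check counts as one opportunity. The per-category counts come from the "What We Tested" section and the passed count from the badge alt text ("35/57 checks").

```python
# Check counts per category, as listed under "What We Tested (57 Checks)".
CHECKS_PER_CATEGORY = {
    "Crawlability & Accessibility": 12,
    "Structured Data & Entity Clarity": 11,
    "Content Quality & Structure": 10,
    "Security & Trust Signals": 8,
    "Performance & UX": 9,
    "Readability Analysis": 7,
}

# Passed checks, taken from the badge alt text "35/57 checks".
PASSED_CHECKS = 35

total_checks = sum(CHECKS_PER_CATEGORY.values())
# Assumption: each check that did not pass is one open opportunity.
open_items = total_checks - PASSED_CHECKS
# Assumption: the score is an unweighted pass ratio.
pass_rate = PASSED_CHECKS / total_checks

if __name__ == "__main__":
    print(f"total checks: {total_checks}")
    print(f"open items:   {open_items}")
    print(f"pass rate:    {pass_rate:.1%}")
```

Under these assumptions the six categories sum to 57 checks, and the 22 checks that did not pass match the "22 AI Visibility Opportunities Detected" figure.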

Does ChatGPT/Gemini/Perplexity know SafetyKit?


Sometimes, but not consistently: models may rely on training data, web retrieval, or both, and results vary by query and time. This report measures observable visibility and correctness signals rather than assuming permanent "knowledge." Our 4 LLM visibility checks confirm whether major platforms can correctly recognize and describe SafetyKit for relevant queries.

How often is this report updated?


We rescan periodically and show the last updated date (currently Jan 23, 2026) so teams can validate freshness. Automated scans run bi-weekly, with manual validation of LLM visibility conducted monthly. Significant changes trigger intermediate updates.

Can I embed the AI Trust indicator on my site?


Yes. Use the badge embed code provided in the "Embed Badge" section above; it links back to this public verification URL so others can validate the indicator. The badge displays current verification status and updates automatically when the verification is refreshed.

Is this a certification or endorsement?


No. It's an evidence-based, repeatable scan of public signals that affect AI and search interpretability. "Verified" status indicates sufficient technical signals for machine readability, not business quality, legal compliance, or product efficacy. It represents a snapshot of technical accessibility at scan time.
