DeepSource: Verified Review & AI Trust Profile

DeepSource is the only all-in-one platform for SAST, static analysis, SCA, and code coverage that is purpose-built for developers.

DeepSource Conversations, Questions and Answers

3 questions and answers about DeepSource

What features should I look for in a comprehensive DevSecOps platform?

A comprehensive DevSecOps platform should offer a range of integrated features that support secure and efficient software development. Key features include static application security testing (SAST) to identify vulnerabilities in code, software composition analysis (SCA) to manage open-source dependencies, and code coverage tools to measure how much of the code your tests actually exercise. Additionally, capabilities like AI-assisted secrets detection, automated code formatting, and issue suppression help maintain code quality and security. Integration with popular tools such as Jira, GitHub Issues, and Slack is essential for seamless workflow automation. Features like baseline analysis to focus on new issues, pull request comments for in-context feedback, and customizable quality and security gates to enforce standards are also important for effective DevSecOps practices.
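To make "baseline analysis" and "quality gates" more concrete, here is a minimal Python sketch. It assumes a hypothetical `Issue` record (rule ID, file, line, severity) and a made-up severity scale; it is not DeepSource's data model or API. Issues are matched by rule and file rather than by line number, so findings that merely moved are not reported as new.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    """One analyzer finding (hypothetical shape, for illustration only)."""
    rule: str       # made-up rule ID, e.g. "SEC-1"
    path: str       # file containing the finding
    line: int       # line number of the finding
    severity: str   # assumed scale: "critical", "major", "minor"


def fingerprint(issue: Issue) -> tuple[str, str]:
    # Ignore the line number so code that merely moved is not flagged as new.
    return (issue.rule, issue.path)


def new_issues(baseline: list[Issue], current: list[Issue]) -> list[Issue]:
    """Baseline analysis: keep only findings not already present on the base branch."""
    remaining = Counter(fingerprint(i) for i in baseline)
    fresh = []
    for issue in current:
        key = fingerprint(issue)
        if remaining[key] > 0:
            remaining[key] -= 1  # consume one matching baseline occurrence
        else:
            fresh.append(issue)
    return fresh


def gate_passes(issues: list[Issue], max_new: int = 0,
                blocking: tuple[str, ...] = ("critical",)) -> bool:
    """Quality/security gate: fail on any blocking severity or too many new issues."""
    if any(i.severity in blocking for i in issues):
        return False
    return len(issues) <= max_new


if __name__ == "__main__":
    base = [Issue("STYLE-1", "app.py", 10, "minor")]
    head = [
        Issue("STYLE-1", "app.py", 12, "minor"),   # same issue, shifted two lines
        Issue("SEC-1", "db.py", 40, "critical"),   # introduced by this change
    ]
    fresh = new_issues(base, head)
    print([i.rule for i in fresh])  # ['SEC-1']
    print(gate_passes(fresh))       # False
```

Counting matches with a `Counter` means a second occurrence of the same rule in the same file still counts as new, which a plain set lookup would miss.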

How can automated code formatting improve the software development process?

Automated code formatting streamlines the software development process by ensuring that code adheres to consistent style guidelines without manual intervention. This reduces the time developers spend on formatting issues and minimizes code review overhead related to style discrepancies. Automated formatters run on every commit, correcting formatting issues automatically and, if necessary, committing the fixes, which helps maintain a clean and readable codebase. This consistency improves collaboration among team members, as everyone follows the same coding standards. Additionally, automated formatting reduces formatting-related merge conflicts and lets developers focus on functionality and security rather than stylistic details, improving both productivity and code quality.
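The check-versus-fix behavior described above can be sketched in Python with a deliberately tiny, hypothetical formatter that only strips trailing whitespace and normalizes the final newline. Real projects would run an established formatter instead; the function names here are illustrative assumptions, not any tool's API.

```python
def format_source(text: str) -> str:
    """Hypothetical toy formatter: strip trailing whitespace, end with one newline."""
    lines = [line.rstrip() for line in text.splitlines()]
    while lines and lines[-1] == "":  # drop blank lines at end of file
        lines.pop()
    return "\n".join(lines) + "\n" if lines else ""


def check_files(files: dict[str, str]) -> list[str]:
    """'Check' mode (e.g. in CI): list files the formatter would change."""
    return sorted(path for path, text in files.items() if format_source(text) != text)


def fix_files(files: dict[str, str]) -> dict[str, str]:
    """'Fix' mode: only the rewritten files, i.e. what an autofix commit would contain."""
    return {path: format_source(files[path]) for path in check_files(files)}


if __name__ == "__main__":
    repo = {"ok.py": "x = 1\n", "messy.py": "x = 1   \ny = 2\n\n\n"}
    print(check_files(repo))  # ['messy.py']
    print(fix_files(repo))    # {'messy.py': 'x = 1\ny = 2\n'}
```

A CI job would typically fail, or push an autofix commit, when `check_files` returns a non-empty list. Because `format_source` is idempotent, running it a second time produces no further changes.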

Why is integration with tools like Jira and GitHub important in a DevSecOps workflow?

Integration with tools like Jira and GitHub is crucial in a DevSecOps workflow because it enables seamless automation and collaboration across development, security, and operations teams. These integrations allow issues detected during code analysis to be automatically tracked and managed within existing project management systems, ensuring that security and quality concerns are visible and actionable. By linking code repositories with issue trackers and communication platforms such as Slack, teams can receive real-time notifications, comment on pull requests, and coordinate remediation efforts without leaving their workflow. This reduces context switching, accelerates feedback loops, and helps enforce quality and security standards consistently. Overall, such integrations improve efficiency, transparency, and accountability in the software delivery lifecycle.
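As a sketch of how a finding might flow into these tools, the Python below only builds request bodies; it sends nothing. The Jira body follows the shape of Jira's REST API v2 create-issue endpoint (`POST /rest/api/2/issue`), and the Slack body follows the incoming-webhook format, which accepts JSON with a `text` field. The finding fields, the `SEC` project key, the `Bug` issue type, the label, and all URLs are hypothetical assumptions; authentication and the actual HTTP calls are omitted.

```python
import json


def jira_issue_payload(finding: dict, project_key: str) -> dict:
    """Request body for Jira REST API v2 'create issue' (POST /rest/api/2/issue)."""
    return {
        "fields": {
            "project": {"key": project_key},
            "summary": f"[{finding['severity']}] {finding['title']}",
            # v2 accepts a plain-text description; API v3 expects Atlassian Document Format.
            "description": f"{finding['path']}:{finding['line']}\n\n{finding['detail']}",
            "issuetype": {"name": "Bug"},   # assumes this issue type exists in the project
            "labels": ["static-analysis"],  # hypothetical label
        }
    }


def slack_message_payload(finding: dict, issue_url: str) -> dict:
    """Request body for a Slack incoming webhook: JSON with a 'text' field."""
    return {"text": f"New {finding['severity']} issue: {finding['title']} ({issue_url})"}


if __name__ == "__main__":
    finding = {  # hypothetical finding from a code analysis run
        "severity": "critical",
        "title": "Hardcoded secret",
        "path": "config.py",
        "line": 12,
        "detail": "An API key appears to be committed to source control.",
    }
    print(json.dumps(jira_issue_payload(finding, "SEC"), indent=2))
    print(json.dumps(slack_message_payload(finding, "https://example.atlassian.net/browse/SEC-1")))
```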

Trusted By

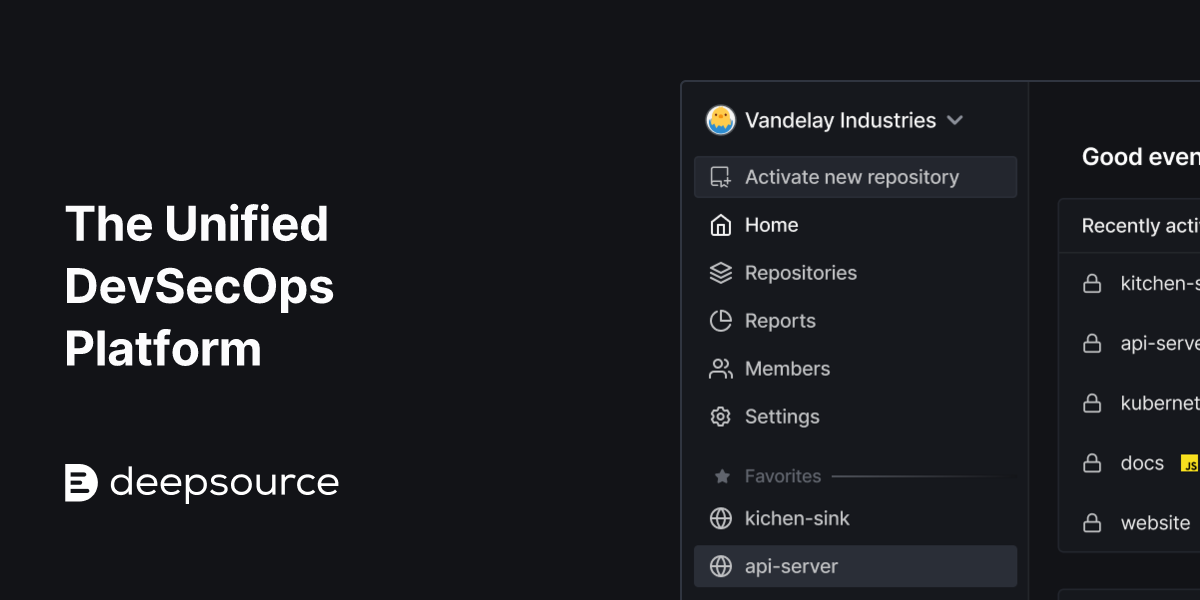

Certifications & Compliance

SOC 2

Services

Cybersecurity & Risk Management

Application Security & Vulnerability Management

Software Development Tools

Code Quality Platforms

AI Trust Verification Report

Public validation record for DeepSource: evidence of machine-readability across 57 technical checks and 4 LLM visibility validations.

Evidence & Links

- Crawlability & Accessibility

- Structured Data & Entities

- Content Quality Signals

- Security & Trust Indicators

Verifiable Identity Links

Legal & Compliance

- Privacy Policy

- Terms of Service

- Trust Center

- Security

- Legal

Third-party Identity

- X (Twitter)

- GitHub

- YouTube

Do These LLMs Know This Website?

LLM "knowledge" is not binary. Some answers come from training data, others from retrieval/browsing, and results vary by prompt, language, and time. Our checks measure whether the model can correctly identify and describe the site for relevant prompts.

| LLM Platform | Recognition Status | Visibility Check |
|---|---|---|
| Detected | Detected | |
| Detected | Detected | |
| Detected | Detected | |
| Detected | Detected | |

Note: Model outputs can change over time as retrieval systems and model snapshots change. This report captures visibility signals at scan time.

What We Tested (57 Checks)

We evaluate categories that affect whether AI systems can safely fetch, interpret, and reuse information:

Crawlability & Accessibility

12 checks: Fetchable pages, indexable content, robots.txt compliance, and crawler access for GPTBot, OAI-SearchBot, and Google-Extended
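A robots.txt crawler-access check of this kind can be sketched with Python's standard `urllib.robotparser`. The sample robots.txt and URL are hypothetical; a real check would fetch the site's live file. `Google-Extended` is a control token honored by Google rather than a separate fetching crawler, but it is matched in robots.txt the same way as the other user-agent tokens.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the crawlability check above.
AI_AGENTS = ["GPTBot", "OAI-SearchBot", "Google-Extended"]


def ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """For each AI user-agent token, report whether robots.txt allows fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_AGENTS}


if __name__ == "__main__":
    # Hypothetical robots.txt: block GPTBot everywhere, allow every other agent.
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(ai_crawler_access(sample, "https://example.com/docs/"))
    # {'GPTBot': False, 'OAI-SearchBot': True, 'Google-Extended': True}
```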

Structured Data & Entity Clarity

11 checks: Schema.org markup, JSON-LD validity, Organization/Product entity resolution, and knowledge panel alignment
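A basic JSON-LD validity check can be sketched with Python's standard `html.parser` and `json` modules: extract each `<script type="application/ld+json">` block, confirm it parses, and report its `@type`. This is a syntax-level sketch under our own assumptions; it does not validate the schema.org vocabulary, which is the job of dedicated schema testing tools.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> element."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._inside = False

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data  # script text may arrive in several chunks


def check_jsonld(html: str) -> list[str]:
    """Return the @type of each JSON-LD entity, or a note on why a block is invalid."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    results = []
    for raw in extractor.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as err:
            results.append(f"invalid JSON: {err.msg}")
            continue
        if isinstance(data, dict) and "@graph" in data:
            data = data["@graph"]  # several entities bundled in one block
        for item in data if isinstance(data, list) else [data]:
            if isinstance(item, dict):
                results.append(str(item.get("@type", "missing @type")))
            else:
                results.append("not a JSON object")
    return results


if __name__ == "__main__":
    page = (
        '<script type="application/ld+json">'
        '{"@context": "https://schema.org", "@type": "Organization", "name": "Example Co"}'
        "</script>"
        '<script type="application/ld+json">{"@type": "WebSite",}</script>'  # trailing comma
    )
    print(check_jsonld(page))  # first entry 'Organization', second reports invalid JSON
```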

Content Quality & Structure

10 checks: Answerable content structure, factual consistency, semantic HTML, E-E-A-T signals, and presence of citation-worthy data

Security & Trust Signals

8 checks: HTTPS enforcement, security headers, privacy policy presence, author verification, and transparency disclosures
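A header check along these lines can be sketched in Python. The report does not say which headers it inspects, so the `EXPECTED_HEADERS` list below is an assumption built from commonly recommended security headers, and the hypothetical `security_header_problems` function takes headers you have already fetched rather than making a request itself.

```python
# Assumed checklist: commonly recommended security headers (not the report's own list).
EXPECTED_HEADERS = {
    "strict-transport-security": "tells browsers to use HTTPS on future visits (HSTS)",
    "content-security-policy": "restricts where scripts and other resources may load from",
    "x-content-type-options": "should be 'nosniff' to disable MIME-type sniffing",
    "referrer-policy": "limits how much of the URL is sent in the Referer header",
}


def security_header_problems(url: str, headers: dict[str, str]) -> list[str]:
    """List issues for one HTTP response, given its final URL and response headers."""
    # Header names are case-insensitive, so normalize them before lookup.
    present = {name.lower(): value.strip() for name, value in headers.items()}
    problems = []
    if not url.lower().startswith("https://"):
        problems.append("not served over HTTPS")
    for name, purpose in EXPECTED_HEADERS.items():
        if name not in present:
            problems.append(f"missing {name} ({purpose})")
    if present.get("x-content-type-options", "nosniff").lower() != "nosniff":
        problems.append("x-content-type-options is not 'nosniff'")
    return problems


if __name__ == "__main__":
    fetched = {"Strict-Transport-Security": "max-age=31536000", "X-Content-Type-Options": "nosniff"}
    for problem in security_header_problems("https://example.com/", fetched):
        print(problem)
```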

Performance & UX

9 checks: Core Web Vitals, mobile rendering, minimal JavaScript dependency, and reliable uptime signals
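For the Core Web Vitals portion, Google publishes "good" and "poor" thresholds for each metric, and a page passes when all three are good, evaluated at the 75th percentile of real-user visits. The sketch below applies those thresholds to already-computed values; how this report weights the result is not stated, so the simplified pass/fail function is an assumption.

```python
# Published thresholds per metric: (good upper bound, poor lower bound).
# At or below the first value is "good"; above the second is "poor".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, seconds
    "INP": (200, 500),   # Interaction to Next Paint, milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless
}


def rate(metric: str, value: float) -> str:
    """Classify one metric value as 'good', 'needs improvement', or 'poor'."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


def passes_core_web_vitals(p75: dict[str, float]) -> bool:
    """Simplified: pass only if all three metrics are present and rated 'good'."""
    return all(m in p75 and rate(m, p75[m]) == "good" for m in THRESHOLDS)


if __name__ == "__main__":
    page = {"LCP": 2.1, "INP": 250, "CLS": 0.05}  # hypothetical 75th-percentile values
    print({m: rate(m, v) for m, v in page.items()})
    print(passes_core_web_vitals(page))  # False, because INP needs improvement
```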

Readability Analysis

7 checks: Clear nomenclature matching user intent, disambiguation from similar brands, and consistent naming across pages

9 AI Visibility Opportunities Detected

These technical gaps effectively "hide" DeepSource from modern search engines and AI agents.

Top 3 Blockers

- JSON-LD Schema: Organization, Product, FAQ, WebSite. Add schema.org JSON-LD to describe your key entities (Organization, Product/Service, FAQPage, WebSite, Article when relevant). Structured data makes your meaning explicit and improves the chance of rich results and accurate AI citations. Validate markup with schema testing tools and keep the data consistent with the visible page content.

- Dedicated Pricing/Product schema. Use Product and Offer schema (or a pricing page with structured data) to describe plans, prices, currency, availability, and key features. This reduces ambiguity for both search engines and AI assistants and can unlock richer search snippets. Keep pricing up to date and match schema values to the visible pricing table.

- Breadcrumbs with structured data (BreadcrumbList). Add visible breadcrumbs for users and BreadcrumbList structured data for crawlers. Breadcrumbs clarify site hierarchy (category > subcategory > page) and help systems understand topical relationships. This can improve search snippets and make it easier for AI to choose the right page as a source.
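The three blockers above can be addressed with JSON-LD like the following, generated here in Python. All names, URLs, and prices are hypothetical placeholders, not DeepSource's real data; the property names (`sameAs`, `offers`, `priceCurrency`, `itemListElement`, `position`) are standard schema.org terms. Validate the output with a schema testing tool and keep it consistent with the visible page.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> dict:
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # official profiles, e.g. X, GitHub, YouTube
    }


def product_offer_jsonld(name: str, description: str, price: str,
                         currency: str, url: str) -> dict:
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": price,             # keep in sync with the visible pricing table
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
            "availability": "https://schema.org/InStock",
            "url": url,
        },
    }


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> dict:
    """trail: (name, url) pairs from the home page down to the current page."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": pos, "name": name, "item": url}
            for pos, (name, url) in enumerate(trail, start=1)
        ],
    }


def script_tag(data: dict) -> str:
    """Wrap JSON-LD for embedding in HTML; escape '</' so a value can't end the tag early."""
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    print(script_tag(organization_jsonld(
        "Example Co", "https://example.com", "https://example.com/logo.png",
        ["https://github.com/example"])))
    print(script_tag(product_offer_jsonld(
        "Team plan", "Hosted code analysis for teams.", "10.00", "USD",
        "https://example.com/pricing")))
    print(script_tag(breadcrumb_jsonld([
        ("Home", "https://example.com/"), ("Docs", "https://example.com/docs/")])))
```

Escaping `</` as `<\/` keeps a value containing `</script>` from closing the tag early; `\/` is a valid JSON escape, so parsers read the value unchanged.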

Top 3 Quick Wins

- List in public LLM indexes (e.g., Hugging Face, Poe profiles). List your tools, datasets, docs, or brand pages on major AI/LLM discovery hubs where relevant (for example model/dataset repositories or app directories). These platforms add credibility signals (likes, forks, usage) and create additional crawlable references to your brand. Keep names, descriptions, and links consistent with your official website.

- LLM-crawlable llms.txt. Create an llms.txt file to guide AI crawlers to your most important, high-quality pages (docs, pricing, about, key guides). Keep it short, well-structured, and focused on authoritative URLs you want cited. Treat it as a curated "AI sitemap" that improves discovery and reduces the risk of crawlers prioritizing low-value pages.

- Alt text on key images (e.g., logos, screenshots). Add accurate alt text for important images such as logos, product screenshots, diagrams, and charts. Describe what the image shows and why it matters, not just the file name. Good alt text improves accessibility and helps AI systems interpret image context when summarizing your page.
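Two of these quick wins can be sketched with the Python standard library: a builder for an llms.txt file and an audit that lists images missing alt text. The llms.txt layout (an H1 title, a `>` summary line, then `##` sections of Markdown links) follows the public llms.txt proposal as we understand it; the site name, URLs, and section names are hypothetical.

```python
from html.parser import HTMLParser


def build_llms_txt(title: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Assemble llms.txt: H1 title, '>' summary, then '##' sections of Markdown links."""
    out = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        out += [f"## {heading}", ""]
        for text, url, note in links:
            out.append(f"- [{text}]({url}): {note}" if note else f"- [{text}]({url})")
        out.append("")
    return "\n".join(out)


class AltTextAudit(HTMLParser):
    """Collect the src of every <img> whose alt attribute is missing or empty."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            # alt="" is valid for purely decorative images, so review these by hand.
            if not (attributes.get("alt") or "").strip():
                self.missing.append(attributes.get("src") or "(no src)")


if __name__ == "__main__":
    # Hypothetical site structure; list only pages you want cited.
    print(build_llms_txt(
        "Example Co",
        "Code analysis platform for developers.",
        {
            "Docs": [("Getting started", "https://example.com/docs/start", "Setup guide")],
            "Optional": [("Blog", "https://example.com/blog", "")],
        },
    ))
    audit = AltTextAudit()
    audit.feed('<img src="logo.png" alt="Example Co logo"><img src="shot.png">')
    print(audit.missing)  # ['shot.png']
```

Because an empty `alt=""` is correct for purely decorative images, treat the audit's output as a list to review rather than a list of errors.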

Embed Badge

Display this AI Trust indicator on your website. It links back to this public verification URL.

<a href="https://bilarna.com/provider/deepsource" target="_blank" rel="nofollow noopener noreferrer" class="bilarna-trust-badge">
  <img src="https://bilarna.com/badges/ai-trust-deepsource.svg"
       alt="AI Trust Verified by Bilarna (48/57 checks)"
       width="200" height="60" loading="lazy">
</a>

Cite This Report

APA / MLA: Paste-ready citation for articles, security pages, or compliance documentation.

Bilarna. "DeepSource AI Trust & LLM Visibility Report." Bilarna AI Trust Index, Jan 22, 2026. https://bilarna.com/provider/deepsource

What Verified Means

Verified means Bilarna's automated checks found enough consistent trust and machine-readability signals to treat the website as a dependable source for extraction and referencing. It is not a legal certification or an endorsement; it is a measurable snapshot of public signals at the time of scan.

Frequently Asked Questions

What does the AI Trust score for DeepSource measure?

It summarizes crawlability, clarity, structured signals, and trust indicators that influence whether AI systems can reliably interpret and reference DeepSource. The score aggregates 57 technical checks across six categories that affect how LLMs and search systems extract and validate information.

Does ChatGPT/Gemini/Perplexity know DeepSource?

Sometimes, but not consistently: models may rely on training data, web retrieval, or both, and results vary by query and time. This report measures observable visibility and correctness signals rather than assuming permanent "knowledge." Our 4 LLM visibility checks confirm whether major platforms can correctly recognize and describe DeepSource for relevant queries.

How often is this report updated?

We rescan periodically and show the last updated date (currently Jan 22, 2026) so teams can validate freshness. Automated scans run bi-weekly, with manual validation of LLM visibility conducted monthly. Significant changes trigger intermediate updates.

Can I embed the AI Trust indicator on my site?

Yes. Use the badge embed code provided in the "Embed Badge" section above; it links back to this public verification URL so others can validate the indicator. The badge displays the current verification status and updates automatically when the verification is refreshed.

Is this a certification or endorsement?

No. It's an evidence-based, repeatable scan of public signals that affect AI and search interpretability. "Verified" status indicates sufficient technical signals for machine readability, not business quality, legal compliance, or product efficacy. It represents a snapshot of technical accessibility at scan time.
