Machine-Ready Briefs

AI translates unstructured needs into a technical, machine-ready project request.


Stop browsing static lists. Tell Bilarna your specific needs. Our AI translates your words into a structured, machine-ready request and instantly routes it to verified AI Model Fine-tuning experts for accurate quotes.


Compare providers using verified AI Trust Scores & structured capability data.

Skip the cold outreach. Request quotes, book demos, and negotiate directly in chat.

Filter results by specific constraints, budget limits, and integration requirements.

Eliminate risk with our 57-point AI safety check on every provider.

Verified companies you can talk to directly

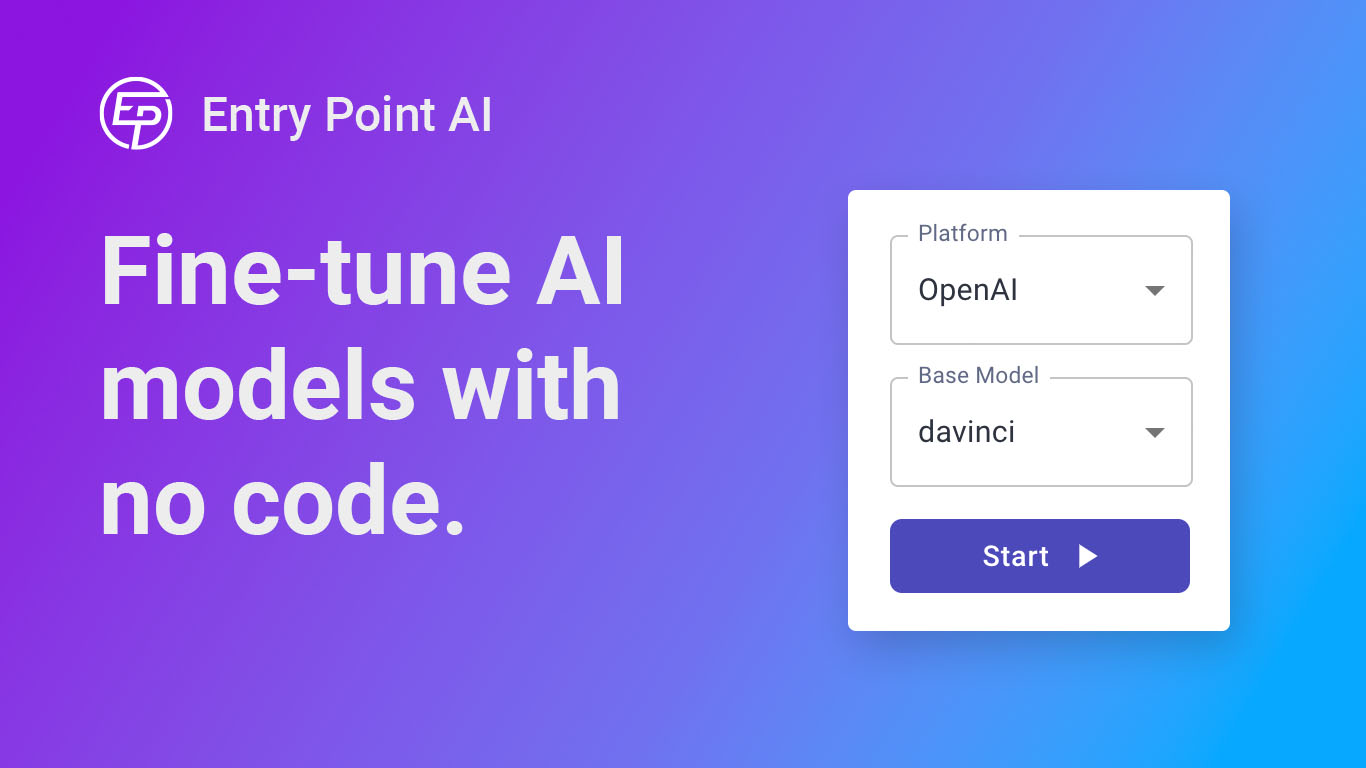

Train, manage, and evaluate custom large language models (LLMs) fast and efficiently on Entry Point AI with no code required.


Run a free AEO + signal audit for your domain.

AI Answer Engine Optimization (AEO)

List once. Convert intent from live AI conversations without heavy integration.

AI model fine-tuning is the process of adapting a pre-trained foundation model to excel at a specific task or to work with a unique dataset. This specialized training exposes the model to new, domain-specific data in order to adjust its internal parameters. It enables businesses to achieve higher accuracy, improve relevance, and reduce development costs compared to building a model from scratch.

Identify and procure a suitable pre-trained large language model or vision model that aligns with the target task's fundamental requirements.

Curate and label a high-quality, task-specific dataset that will teach the model the nuances and patterns of the new domain.

Run iterative training cycles on the specialized data, then rigorously evaluate the model's performance on a separate validation set to confirm that it generalizes beyond the training examples.
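The three steps above (start from a pre-trained model, prepare task-specific data, then train and validate) can be illustrated with a deliberately tiny sketch. Everything here is an assumption made for illustration: the "pre-trained model" is a one-variable linear model, the dataset is synthetic, and the function names are hypothetical. Real fine-tuning of a language or vision model uses far larger models and dedicated tooling, but the workflow has the same shape.

```python
import random

def predict(w, b, x):
    """Linear model: y = w * x + b."""
    return w * x + b

def mse(w, b, data):
    """Mean squared error of the model over (x, y) pairs."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, train, val, lr=0.05, epochs=200):
    """Continue gradient descent from pre-trained (w, b).

    Returns (best_val_loss, w, b) for the parameters that scored
    best on the validation set, not simply the last ones.
    """
    best = (mse(w, b, val), w, b)
    for _ in range(epochs):
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in train) / len(train)
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in train) / len(train)
        w -= lr * grad_w
        b -= lr * grad_b
        val_loss = mse(w, b, val)
        if val_loss < best[0]:
            best = (val_loss, w, b)
    return best

# Step 1: "pre-trained" parameters (hypothetical: learned elsewhere on y = 2x).
pretrained_w, pretrained_b = 2.0, 0.0

# Step 2: a small synthetic "domain" dataset following y = 2.5x + 1 plus noise.
rng = random.Random(0)
data = [(i / 10, 2.5 * (i / 10) + 1.0 + rng.gauss(0, 0.1)) for i in range(-20, 21)]
rng.shuffle(data)
train, val = data[:30], data[30:]  # validation pairs are never used for gradients

# Step 3: train on the domain data and compare validation error before and after.
loss_before = mse(pretrained_w, pretrained_b, val)
loss_after, tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, train, val)
print(f"validation MSE before: {loss_before:.3f}, after: {loss_after:.3f}")
```

Keeping the validation pairs out of the gradient computation is the point of the sketch: the before and after numbers are only meaningful because the model never trained on them.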

Fine-tune language models on past support tickets and company knowledge bases to provide accurate, brand-aligned automated responses.

Adapt models to extract key terms, clauses, and sentiment from complex contracts, earnings reports, and regulatory filings.

Specialize vision models on annotated X-rays or MRIs to assist radiologists in detecting anomalies and specific conditions with greater precision.

Train models on platform-specific policy violations to automatically flag harmful text, images, and video content at scale.

Fine-tune models on sensor data from industrial equipment to forecast failures and recommend proactive maintenance schedules.

Bilarna ensures you connect with trustworthy AI model fine-tuning specialists. Every provider on our platform is rigorously evaluated using a proprietary 57-point AI Trust Score, assessing technical expertise, project reliability, data security compliance, and proven client satisfaction. This transparent scoring allows for confident, informed comparisons.

Training from scratch requires massive datasets, immense computational resources, and significant time to build a model's foundational knowledge. Fine-tuning starts with a pre-trained model that already understands general patterns, requiring less data and compute to specialize it for a specific, narrower task efficiently.

Data requirements vary by model complexity and task specificity, but effective fine-tuning often needs hundreds to several thousand high-quality, curated examples. The key is data relevance and quality, not just volume, as clean, well-labeled domain-specific data yields the best performance gains.

Key risks include catastrophic forgetting, where the model loses its general knowledge, and overfitting, where it performs well only on the training data. Challenges also involve managing computational costs, ensuring data privacy, and maintaining model interpretability after the specialized training process.
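One common way to catch overfitting is to watch the validation loss during training and stop once it stops improving, a technique usually called early stopping. The sketch below is a minimal illustration under stated assumptions: the function name, the `patience` parameter, and the loss values are all made up for the example and do not come from any particular provider or library.

```python
def best_epoch_with_patience(val_losses, patience=2):
    """Return the index of the epoch with the best validation loss,
    stopping the scan once `patience` epochs pass without improvement."""
    best_idx = 0
    for i, loss in enumerate(val_losses):
        if loss < val_losses[best_idx]:
            best_idx = i
        elif i - best_idx >= patience:
            break  # validation loss has stalled; later epochs are likely overfitting
    return best_idx

# Illustrative (made-up) losses: training loss keeps falling,
# while validation loss bottoms out and then rises again.
train_losses = [0.90, 0.60, 0.40, 0.25, 0.15, 0.08]
val_losses = [0.95, 0.70, 0.55, 0.58, 0.63, 0.71]

stop_at = best_epoch_with_patience(val_losses)
print(f"keep the checkpoint from epoch index {stop_at}")
```

The widening gap between the two loss curves after the chosen epoch is the overfitting signature described above: the model keeps improving on data it has seen while getting worse on data it has not.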

Success is measured using metrics relevant to the specific task, such as accuracy or F1 score for classification and BLEU score for translation or other text-generation tasks. It is critical to evaluate the model on a completely separate held-out dataset that it has not seen during training to get a true measure of its generalization and real-world performance.
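As a concrete illustration of two of these metrics, the following sketch computes accuracy and F1 score for a binary classifier from first principles. The labels and predictions are invented for the example, and the function names are hypothetical; in practice a library implementation would normally be used.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the `positive` class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Made-up held-out labels and model predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

acc = accuracy(y_true, y_pred)
f1 = f1_score(y_true, y_pred)
print(f"accuracy={acc:.2f}, F1={f1:.2f}")
```

Accuracy treats every example equally, while F1 focuses on the positive class, which makes it the more informative of the two when positives are rare.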

Most modern, well-documented open-source models (like those hosted on Hugging Face) and APIs from major vendors are designed to be fine-tuned. However, licensing terms, technical architecture, and available tooling must be checked to ensure the specific base model is suitable and legally permissible for your intended commercial application.

Microschools are independently owned and operated, which means they are not required to follow a specific curriculum or teaching model. Each microschool is designed and led by its educator-founder, who selects the curriculum, learning approach, and instructional methods that best serve their students' needs. This flexibility allows microschools to tailor education to their community and student population, fostering innovative and personalized learning experiences. The common thread among microschools is a commitment to small learning environments, strong relationships, and student-centered education rather than adherence to a standardized program.

Yes, AI marketing platforms can generate professional model photoshoots without hiring models or studios.
1. Upload your product images or specify fashion items.
2. Choose model types, poses, and settings from AI options.
3. Customize styles to align with your brand identity.
4. Generate high-quality model photoshoots instantly.
5. Use the images for fashion marketing, e-commerce, or virtual try-ons without additional costs or logistics.

Software developers for a dedicated team are rigorously vetted through a multi-stage process focusing on technical skills, problem-solving, and cultural fit. The process typically begins with a review of the candidate's background in competitive programming or relevant open-source contributions. This is followed by a series of technically demanding written tasks or coding challenges, often compiled and assessed by senior technical leadership such as a CTO. Candidates who pass then undergo one-on-one technical interviews to evaluate their depth of knowledge, architectural thinking, and proficiency in specific languages or frameworks. A final interview often assesses soft skills, communication, and alignment with client project needs. This thorough vetting ensures that only engineers who demonstrate exceptional coding standards, ethical professionalism, and the ability to integrate into client workflows are selected for dedicated client teams.

A foundation model improves accuracy in time series predictions by leveraging its training on a wide variety of datasets, which allows it to learn generalized patterns and relationships across different domains. This broad learning helps the model to better understand complex temporal dynamics, including trends, seasonality, and irregular fluctuations. Additionally, foundation models often use advanced neural network architectures and transfer learning techniques, enabling them to adapt quickly to new time series data with limited additional training. As a result, these models can provide more reliable and precise forecasts compared to traditional, domain-specific models.

Administrators can manage AI model access and security by using centralized controls.
1. Set up Single Sign-On (SSO) with providers like Okta, Microsoft, or Google for secure authentication.
2. Use an admin dashboard to control which AI models team members can access.
3. Define policies to regulate usage and ensure compliance.
4. Connect data sources securely to enhance AI capabilities while maintaining enterprise security standards.

AI datasets play a crucial role in enhancing both the safety and capabilities of machine learning models. By providing diverse, high-quality, and well-annotated data, these datasets help models learn more accurately and generalize better to real-world scenarios. This reduces the risk of errors, biases, and unintended behaviors. Additionally, carefully curated datasets can include examples that test model robustness and ethical considerations, ensuring safer deployment. Collaborations with AI labs often focus on building such datasets to address specific challenges, ultimately leading to smarter and more reliable AI systems.

AI development platforms often provide built-in monitoring and evaluation tools designed specifically for AI workflows. These platforms capture detailed traces of AI model executions, allowing teams to replay and analyze each step. Continuous evaluation features enable automatic assessment of model outputs as new data arrives, ensuring ongoing visibility into accuracy and performance. Segmented analytics help teams understand how models perform across different prompts, topics, or customer segments. Additionally, customizable evaluation suites and support for preset and custom evaluators allow teams to tailor assessments to their specific needs, facilitating rapid iteration and improvement.
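Segmented analytics of this kind amount to grouping evaluation results by a label before aggregating them. The sketch below is a minimal illustration, assuming each evaluated output has already been tagged with a segment name and a pass/fail judgment; the segment names, records, and function name are hypothetical and do not reflect any specific platform's API.

```python
from collections import defaultdict

def accuracy_by_segment(records):
    """Aggregate (segment, is_correct) pairs into per-segment accuracy."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [correct, count]
    for segment, is_correct in records:
        totals[segment][0] += int(is_correct)
        totals[segment][1] += 1
    return {seg: correct / count for seg, (correct, count) in totals.items()}

# Made-up evaluation records: (topic of the prompt, whether the output passed).
records = [
    ("billing", True), ("billing", True), ("billing", False),
    ("shipping", True), ("shipping", False),
    ("returns", True),
]

report = accuracy_by_segment(records)
for segment, score in sorted(report.items()):
    print(f"{segment}: {score:.2f}")
```

A single overall accuracy figure would hide the fact that one segment performs noticeably worse than the others, which is the kind of gap a per-segment breakdown is meant to surface.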

AI models can be fine-tuned for specific industries by retraining them on domain-specific data and optimizing parameters for relevant tasks, which enhances accuracy and relevance for niche applications. This process begins with collecting and annotating data from the target vertical, such as medical records for healthcare, financial transactions for banking, or customer behavior for retail. The pre-trained model is then further trained on this curated dataset to adapt its knowledge, improving performance for use cases like diagnostic assistance, fraud detection, or personalized recommendations. Fine-tuning requires expertise in machine learning techniques, including hyperparameter adjustment and validation against industry benchmarks to ensure reliability. It enables businesses to leverage advanced AI capabilities without building models from scratch, saving time and resources while achieving customized performance that aligns with operational requirements and regulatory standards.

Use an AI sommelier model to enhance B2B wine sales by providing expert wine recommendations and personalized customer interactions. Steps:
1. Integrate the AI sommelier into your sales platform.
2. Train the model with extensive wine knowledge to assist wholesale clients.
3. Use AI-driven insights to suggest wines based on customer preferences and market trends.
4. Enable real-time support for sales teams and customers to increase engagement.
5. Analyze sales data to continuously optimize wine offerings and recommendations.

Companies can access conversational audio datasets through platforms that offer licensed and ethically sourced audio data. Typically, they start by discussing their specific use case, including requirements such as hours of data, languages, and scenarios. They can select from existing datasets or request custom annotations. Samples are usually provided within 48 hours for quality review and testing in their own training pipelines. Full datasets can then be accessed via API or cloud storage services like S3, enabling immediate use for AI model training and scaling annotation efforts as needed.